Example - Upper Triangular Matrix

This presentation will discuss the following three topics:

The eigenvalue problem has applications in many fields, including

Given an n×n matrix A and a nonzero n×1 vector x, the n values of λ for which

$$A x = \lambda x$$

are known as the eigenvalues of A. Subtracting λx from both sides of the equation and writing λx as λI_n x results in

$$(A - \lambda I_n)\, x = 0.$$

Because x is a nonzero solution of this homogeneous system, the matrix

$$A - \lambda I_n$$

cannot be invertible, and therefore

$$\det(A - \lambda I_n) = 0.$$

This last equation is known as the characteristic equation of A, and its roots are the eigenvalues of A.
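For n = 2 the characteristic equation can be written out explicitly. A short worked form, with generic entries a, b, c, d introduced here only for illustration:

$$
A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}, \qquad
\det(A - \lambda I_2) = (a - \lambda)(d - \lambda) - bc = \lambda^2 - (a + d)\,\lambda + (ad - bc) = 0,
$$

$$
\lambda_{1,2} = \frac{(a + d) \pm \sqrt{(a - d)^2 + 4bc}}{2}.
$$

The two roots always sum to the trace a + d and multiply to the determinant ad − bc.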

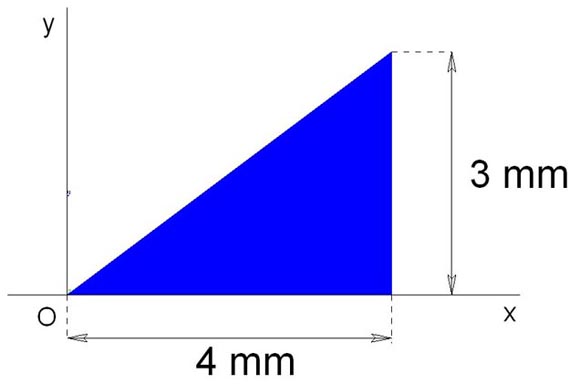

Consider the triangular area shown below.

Given that the moments of inertia of the area are

The two-dimensional moment of inertia matrix can be written as

so that the eigenvalues are the area's principal moments of inertia. Using $\det(A - \lambda I_n) = 0$ with $n = 2$, the characteristic equation is

In polynomial form,

The principal moments of inertia are therefore
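Because the inertia matrix is a symmetric 2×2 matrix, its characteristic equation is a quadratic and the principal moments have a closed form. A minimal sketch, assuming the matrix is written as [[I_x, −I_xy], [−I_xy, I_y]] (sign conventions for the product of inertia vary, but only I_xy² enters the result). The function name `principal_moments` and the sample values are hypothetical, not the triangle's data:

```python
import math


def principal_moments(i_x: float, i_y: float, i_xy: float) -> tuple[float, float]:
    """Return the eigenvalues of the symmetric 2x2 inertia matrix [[i_x, -i_xy], [-i_xy, i_y]].

    They are the roots of lambda^2 - (i_x + i_y) * lambda + (i_x * i_y - i_xy**2) = 0,
    i.e. center +/- radius, where radius = sqrt(((i_x - i_y) / 2)**2 + i_xy**2).
    """
    center = (i_x + i_y) / 2.0
    radius = math.hypot((i_x - i_y) / 2.0, i_xy)
    return center + radius, center - radius


# Hypothetical values: I_x = 10, I_y = 4, I_xy = 4 (any consistent units).
print(principal_moments(10.0, 4.0, 4.0))  # (12.0, 2.0)
```

As a check, 12 + 2 equals the trace 10 + 4, and 12 × 2 equals the determinant 10 × 4 − 4².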

Two n×n matrices A and B are similar if there is an invertible matrix P such that

$$B = P^{-1} A P.$$

Similar matrices have the same eigenvalues.
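The reason similar matrices share eigenvalues follows from the characteristic equation. Assuming B = P⁻¹AP and using det(P⁻¹) det(P) = 1:

$$
\det(B - \lambda I_n) = \det\!\left(P^{-1} A P - \lambda P^{-1} P\right) = \det\!\left(P^{-1} (A - \lambda I_n) P\right) = \det(P^{-1})\,\det(A - \lambda I_n)\,\det(P) = \det(A - \lambda I_n).
$$

A and B therefore have the same characteristic polynomial and hence the same eigenvalues, with the same multiplicities.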

If a matrix Q is orthogonal, then

$$Q^{T} Q = Q Q^{T} = I_n$$

(i.e., the transpose of Q is the inverse of Q).
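The orthogonality condition is easy to check numerically. A minimal sketch in plain Python, assuming matrices are stored as lists of rows; the helper names `transpose`, `matmul`, and `is_orthogonal` are hypothetical, and a 2×2 rotation matrix serves as a convenient example of an orthogonal matrix:

```python
import math


def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def is_orthogonal(q, tol=1e-12):
    """Check whether Q^T Q equals the identity matrix to within tol."""
    qtq = matmul(transpose(q), q)
    n = len(q)
    return all(abs(qtq[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))


theta = 0.3  # arbitrary rotation angle chosen for illustration
rotation = [[math.cos(theta), -math.sin(theta)],
            [math.sin(theta), math.cos(theta)]]
print(is_orthogonal(rotation))                  # True
print(is_orthogonal([[1.0, 1.0], [0.0, 1.0]]))  # False: a shear is not orthogonal
```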

A triangular matrix R can come in two flavors. The first is called lower triangular, in which all entries above the main diagonal are zero. The second is called upper triangular, in which all the entries below the main diagonal are zero.

Triangular matrices have several useful properties. For example, the transpose of a lower triangular matrix is an upper triangular matrix and vice versa. Also, the diagonal entries of a triangular matrix R are the eigenvalues of R.

Example - Upper Triangular Matrix

The eigenvalues of R are its diagonal entries $c_1$, $c_2$, and $c_3$.
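For the 3×3 case the property can be checked directly. Assuming R is upper triangular with diagonal entries $c_1$, $c_2$, $c_3$ (the off-diagonal symbols $r_{12}$, $r_{13}$, $r_{23}$ are placeholders, not values from the example), $R - \lambda I_3$ is still upper triangular, and the determinant of a triangular matrix is the product of its diagonal entries:

$$
R - \lambda I_3 = \begin{pmatrix} c_1 - \lambda & r_{12} & r_{13} \\ 0 & c_2 - \lambda & r_{23} \\ 0 & 0 & c_3 - \lambda \end{pmatrix},
\qquad
\det(R - \lambda I_3) = (c_1 - \lambda)(c_2 - \lambda)(c_3 - \lambda) = 0
\;\Longrightarrow\; \lambda \in \{c_1, c_2, c_3\}.
$$

The off-diagonal entries never enter the characteristic polynomial.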

Starting with an n×n symmetric matrix $A_1$, the QR factorization technique begins by factoring (decomposing) $A_1$ into an orthogonal matrix $Q_1$ and an upper triangular matrix $R_1$ to obtain

$$A_1 = Q_1 R_1.$$

Multiplying $R_1$ on the right by $Q_1$ results in a second matrix,

$$A_2 = R_1 Q_1.$$

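Because $Q_1$ is orthogonal, $R_1 = Q_1^{T} A_1$. Substituting this into $A_2 = R_1 Q_1$ gives

$$A_2 = Q_1^{T} A_1 Q_1 = Q_1^{-1} A_1 Q_1,$$

so $A_2$ is similar to $A_1$ and has the same eigenvalues.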

More generally, for $k = 1, 2, \dots$,

$$A_k = Q_k R_k, \qquad A_{k+1} = R_k Q_k = Q_k^{T} A_k Q_k.$$

The matrix A has eigenvalues 0, 1, 2, and 3.

Result of 1 iteration:

Result of 2 iterations:

Result of 8 iterations:

After 8 iterations, all but two of the expected off-diagonal zeros have fully emerged, and one eigenvalue has been computed to full accuracy.
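The iteration $A_{k+1} = R_k Q_k$ can be sketched in plain Python. This is a minimal, unshifted version under stated assumptions: the QR factorization uses modified Gram-Schmidt (production eigensolvers typically add shifts and use Householder or Givens transformations), the helper names are hypothetical, and Gram-Schmidt as written needs an invertible matrix. For that reason the example uses a hypothetical 3×3 symmetric matrix with eigenvalues 2 − √2, 2, and 2 + √2 instead of the matrix above, which is singular because one of its eigenvalues is 0.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def qr_gram_schmidt(a):
    """Factor square A (list of rows) as A = Q R using modified Gram-Schmidt.

    Assumes A is invertible; a zero pivot r[j][j] would cause a division by zero.
    """
    n = len(a)
    v = [[a[i][j] for i in range(n)] for j in range(n)]  # working copies of A's columns
    q_cols = []
    r = [[0.0] * n for _ in range(n)]
    for j in range(n):
        # Normalize column j to obtain the next orthonormal vector q_j.
        r[j][j] = math.sqrt(sum(x * x for x in v[j]))
        q = [x / r[j][j] for x in v[j]]
        q_cols.append(q)
        # Remove the q_j component from every remaining column.
        for k in range(j + 1, n):
            r[j][k] = sum(q[i] * v[k][i] for i in range(n))
            v[k] = [v[k][i] - r[j][k] * q[i] for i in range(n)]
    q_rows = [[q_cols[j][i] for j in range(n)] for i in range(n)]  # columns -> rows
    return q_rows, r


def qr_algorithm(a, iterations=100):
    """Unshifted QR iteration: factor A_k = Q_k R_k, then set A_{k+1} = R_k Q_k."""
    ak = [row[:] for row in a]
    for _ in range(iterations):
        q, r = qr_gram_schmidt(ak)
        ak = matmul(r, q)  # similar to A_k, so the eigenvalues are preserved
    return ak


# Hypothetical symmetric test matrix with eigenvalues 2 - sqrt(2), 2, 2 + sqrt(2).
A = [[2.0, -1.0, 0.0],
     [-1.0, 2.0, -1.0],
     [0.0, -1.0, 2.0]]
A_final = qr_algorithm(A, iterations=100)
print([round(A_final[i][i], 6) for i in range(len(A))])
```

The diagonal of `A_final` should approach 2 + √2 ≈ 3.414214, 2, and 2 − √2 ≈ 0.585786, while the off-diagonal entries decay toward zero. For the unshifted iteration, each subdiagonal entry shrinks roughly like $|\lambda_{i+1}/\lambda_i|^k$, so eigenvalues that are close in magnitude converge slowly.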